AI slop has reached the publishing industry. For the first time, a commercial novel – ‘Shy Girl’ by Mia Ballard – has been pulled by a mainstream publisher, Hachette, over potential AI use. The scandal has been described as the dawn of a new era defined by “deception” and “uncertainty”, and as a chilling sign of what is to come.

A new frontier

At the end of 2022, I bagged my first role as a Marketing Executive in the trade. AI was only just on the horizon. Any anxieties about the technology felt minor, certainly in comparison to today. In fact, it was an exciting moment to enter the literary scene.

For many, the utter loneliness of the pandemic lockdown encouraged them to read. Even those who were typically reluctant to pick up a book found themselves swiping dusty covers and giving it a go. I remember one friend telling me she no longer had to feel like a fraud for calling herself a Marxist, because she’d actually read Marx.

I left that job in 2024 with the understanding that literature helps us survive when intimacy is taken away. I say ‘survive’ because that is how the absolute loss of human connection feels: like an existential threat. Time has barely passed since I left the industry.

Perhaps I was naive, but the harm publishing may face today is not one I could have conceived of at the time, mostly because I believed readers were hungry for human perspectives. I believed that these were the only perspectives capable of producing writing good enough to reach an editor and, eventually, an audience.

Of course, I am sure most readers are still only interested in reading human-authored works. But it is easy to disregard the pernicious threat AI poses to publishers, authors and our consumption of books, as well as the damage it is already doing.

Like a lot of people, I consider AI writing to be bad: oddly mechanical and formulaic. Perhaps, as a young writer, I use this to dismiss the idea that a robot could replace me (half of UK novelists, however, believe it is possible). My research into the scandal, and into the impact of AI on the industry in general, has complicated this simple response of mine more than I am comfortable with.

The Shy Girl controversy

‘Shy Girl’ was exposed by an AI detection programme, Pangram, which indicated that the novel could be 78% AI-generated. The test itself was prompted by suspicious readers, who flagged passages of unusually repetitive prose. It is also true that other readers loved the book and believed it to be entirely human-authored. ‘Shy Girl’ managed to get past an editorial team and a list of endorsers including author Olivie Blake, who described it as “audacious, inventive, and uniquely horrifying.”

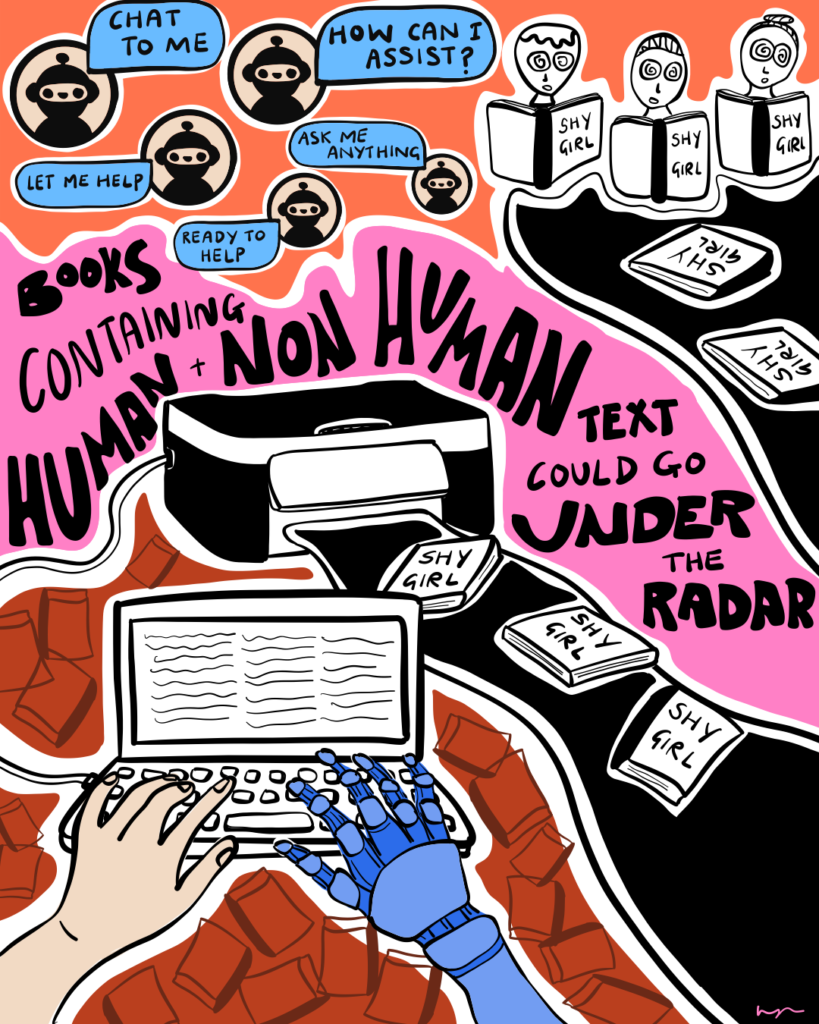

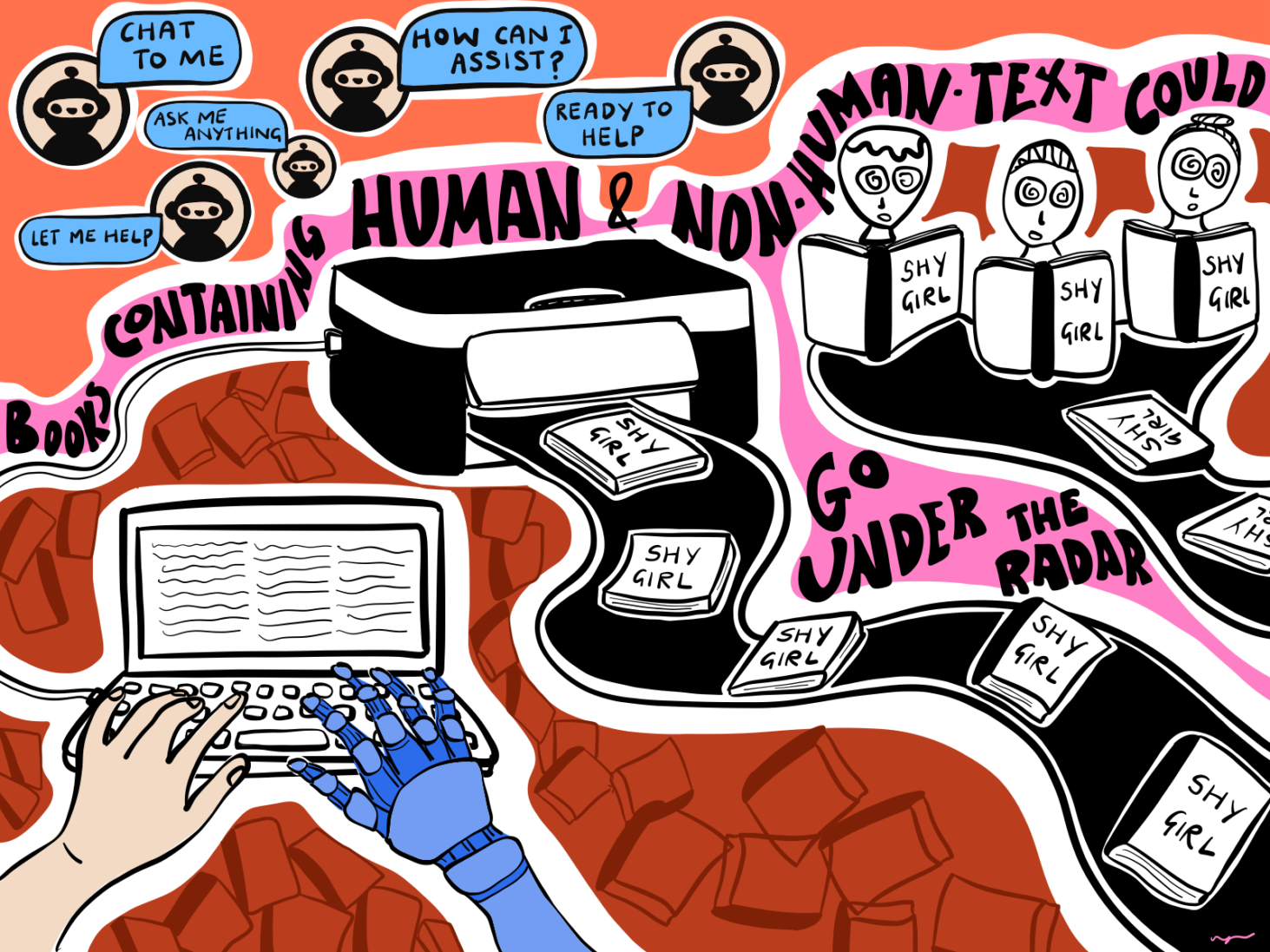

I can see how books that contain a mixture of human and non-human text, even in lopsided proportions, could go under the radar. There is evidence to suggest that the lines are already blurring: humans write online; AI absorbs it; humans read it; eventually this changes what human writing sounds like.

Literary professionals are sounding the alarm, warning of the potential loss of an entire creative sector as computer-generated writing becomes more common and increasingly difficult to detect. “Many of us are too frightened to talk about it”, Peter Cox, managing director of literary agency Redhammer Management, told The Independent in an interview about the scandal. “[But, AI] is enormously economically attractive [to publishers], especially if you produce genre fiction. You can just instruct ChatGPT to produce 80,000 words of romantasy and there you go.” Publishers are, after all, businesses, and if they can increase their output without spending more money, there is little incentive to stop them; it is usually a sales forecast, rather than artistic or cultural value, that convinces a publisher to seal the deal with an author.

I am not a robot

Three years ago, hundreds of AI-generated ebooks, including children’s fiction, flooded the market, and some experts suspect the number has increased dramatically since. At the start of this year, The Society of Authors, a union for writers, developed a scheme to introduce a government-backed ‘Human Author’ logo to identify books written by humans rather than automated ones.

Cox and other literary professionals link the influx of these books to the steady decrease in authorial income over the past five years: one-third of novelists suspect their earnings have been affected by AI displacement, and many claim that generative AI in particular pirates and scrapes their work to train its models.

Because AI detectors are fallible, it is difficult to know precisely how many books on the market are automated. Yet authors are already concerned that they could be competing with the technology, and that this could intensify as it becomes more pervasive, displacing their labour entirely.

Essentially, this means that authors who use AI could eventually drive themselves out of the business, creating new standards of efficiency that human writers cannot meet. This should serve as an urgent call for all literary professionals to avoid the technology, particularly publishers, who could be accelerating this process while diminishing the quality of their books.

But this is not just a problem for art workers. The loss of culture affects us all. Art is not merely a reflection of politics and power; it is material, a tool through which power is moved. I cannot help but feel that the rise of AI-generated books speaks to the way politics is currently organised to act on us: forces that aim to obscure our humanity and the importance of diversity to our own survival. Most troubling, it speaks to the potential erosion of critical thought, our ability to identify when those forces are at play.

Fascism and cultural constraint

A recent study led by the Massachusetts Institute of Technology suggests that ChatGPT “makes our brains less active and our writing less original.” This is hardly a surprise. Still, there is something sinister about the way AI smooths over and obscures variation, especially if you take into account the destruction the tool produces (one AI company, Anthropic, has apparently destroyed millions of physical books in the process of training its models).

My research for this essay coincided with a book I was reading for ‘pleasure’, entitled “The Nazi-Fascist New Order for European Culture”, by Benjamin G. Martin.

I had arrived at this book with images of Nazis burning books etched into my mind. I was intrigued to learn that the regime did not, in fact, intend to destroy culture entirely; it aimed to preserve the forms it believed reflected racial integrity. Fascists, I learnt, make use of culture; for them, though, it is a project that sets out to constrain the arts, weaponising their expressions to entrench a single belief: the unified nation.

The comparison I am drawing here is not meant to suggest that only political fascists are behind the technology that is turning our brains into a shared mush: AI is now as much a political project as a business model. It is certainly illuminating, though, how much the right uses the tool to spread its myopic, violent propaganda, and even to influence how regimes act. Take Donald Trump’s grotesque AI-generated videos in which he presents his ‘new’ vision for Gaza following its obliteration, or his administration’s use of Anthropic’s Claude AI model, which, shockingly, was used to identify targets to bomb in Iran. Clearly, the right sees AI as a way not only to influence and control events but also to flatten how they appear, suppressing our political consciousness as a result.

If we allow AI’s influence on the production of books to continue, then, as professionals in the industry have already warned, everything about how the technology is mobilised points to the depletion of the author’s individual identity.

A shift of this nature would signal the potential for cultural homogeneity, which makes us all easier to exploit and less resistant to extremist ideology. This is why AI is such a seamless tool for the right: it makes all of this seem possible. Worse still, it is already built in ways that inevitably lead to prejudiced outputs; AI is constantly doing the work for them. The tool is trained on pre-existing data, which research has shown is inherently biased against ethnic diversity, progressive gender roles, and sexual minorities. It is difficult to imagine the harm this could inflict on an industry that already lags behind on diversity and inclusion.

Earlier this year, unredacted files revealed that Anthropic developed a “secret plan” to “destructively scan all books in the world”, which is difficult to picture without hearing evil cackling in the background. Defiant authors whose work was included in the company’s dataset successfully filed a claim against the tech giant. They received a settlement, but it is unclear how the company will proceed from here. Surely, this should act as a wake-up call. What might our society look like if no one stands in the way of the mass-scale corruption of art? And what kind of world would replace it?

Exploiting human anxiety

If the output of AI-generated books, or AI in general, says anything about the fragility of culture, then it’s worth asking what motivates the human author to input their words and stories into that cycle. When invited to speak about the scandal, the literary agent Kate Nash concluded that this compulsion was about self-confidence: “Readers trust writers,” she stated. “Writers need to continue to trust themselves over machines.”

Clearly, AI has changed how we value, or place faith in, human creativity, because it has complicated the idea that art is unique to us. I find this concerning because, from my perspective as a writer, the artist is already so insecure. Financially, yes. But the creative process itself makes us innately vulnerable: emotionally, spiritually. Failure, or writer’s block, is part of the creative process. We all know this, but it doesn’t make it any less painful. Not having all the answers seems like the entire point of this process. It can almost seem like a contradiction that we have to make art to find out how to make it. AI exploits this anxiety in the artist: it suggests that we can avoid feeling it and still generate better outcomes. Fragility is replaced by efficiency.

In the age of AI, human creativity matters less, and so does our care for other human beings. It is difficult not to believe that this development is affecting us all, moving the goalposts as we stare down at our phones to see, again, something like Trump’s AI-generated propaganda: his rendering of the Iran war into a memefied video game, removing any detail of human cost, or even participation; his “streamlining [of] the kill chain”. The conditions for this dehumanisation to take place are all around us, from Palestine to Sudan, Myanmar to Kashmir.

The anxiety of being human

Ballard claims she did not personally use AI to write ‘Shy Girl’. Instead, she says that an editor she hired did. Either way, the story remains almost the same: it is about anxiety and doubt. Doubt over skill, doubt over productivity.

As a writer who doubts herself all the time, I can see why a machine might have felt like the best solution. But is the machine a solution to the artistic problem itself, or simply to the feeling of being inadequate? As something as solid and impenetrable as a machine enters our lives in ever more invasive ways, our own lack of those qualities becomes starker. It becomes more of a problem to solve.

During the early stages of writing this article, I thought frequently, for reasons I could not name at first, about an essay by my colleague at Vashti Media, Francesca Newton, published by The Nation, on the different forms, but also the unsettling ubiquity, of emaciation in the world. Emaciation as a political weapon, or, in the West, as a personal choice. “Anxiety is a nauseous sensation, and everyone feels it right now”, she writes. “If I learned anything from all that scrolling [on Instagram], it was that being human is a risk … it would feel better to be something hardier, like an AI model.”

Perhaps, on some emotional level, the influence of AI on culture in general shows us that faith in humanity is not a given, at least not anymore. Still, it has always been something we need to learn to uphold and protect.

What can you do?

- You can sign up to become a member of SoA here, and register your work as Human Authored.

- If you’re a self-published or indie author, the Authors Guild shares tips on how to protect your work against unauthorised use in AI training.

- You can sign this letter to the Big Five publishers, which calls on them to promise not to use generative AI to create books.

- Buy books from your local independent bookshop! Here is a brilliant list of LGBTQIA+ presses and indie shops.

- Read ‘The Eye of the Master: A Social History of Artificial Intelligence’ by Matteo Pasquinelli.

- Read more tech content on shado